Every day, thousands of tweets, blog posts, and announcements flood the tech ecosystem. Most of it is noise — recycled takes, engagement bait, and press releases disguised as news. We built a system to find the 1% that actually matters to builders.

This is the story of how nextbig.dev's AI-powered curation pipeline works, what we got wrong, and what we learned building a system that reads the internet so you don't have to.

The Problem with Tech News

If you're a builder — someone actually shipping products — your relationship with tech news is complicated. You need to stay informed. You can't afford to miss a major API change, a funding shift that affects your market, or a security vulnerability in your stack. But you also can't spend two hours a day scrolling X and Hacker News.

The existing solutions weren't great:

- Algorithmic feeds (X, LinkedIn) optimize for engagement, not relevance. You get outrage and hot takes, not signal.

- Newsletters are written by humans with opinions and blind spots. Great for perspective, bad for comprehensive coverage.

- RSS readers give you everything from your sources, with no ranking or filtering. Firehose, not filter.

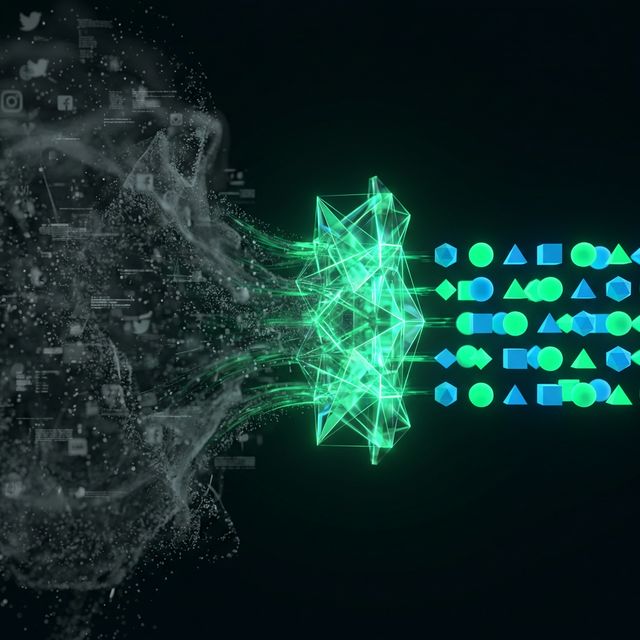

We wanted something different: a system that combines the breadth of algorithmic aggregation with the judgment of a well-informed editor — but runs 24/7 without getting tired or developing biases toward clickbait.

How the Pipeline Works

Source Selection: The 186

Everything starts with source curation. We manually vetted 186 X accounts across six tiers, from tier-1 publications (TechCrunch, The Verge, Wired) down to tier-6 niche builders and hardware accounts. Each tier has its own fetch frequency: the top sources are checked every cycle, while lower tiers rotate to manage API costs.
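One way to picture tiered rotation is as a per-tier interval measured in fetch cycles. The sketch below is only an illustration: the interval values and the name `tiers_due` are assumptions, not the schedule the pipeline actually uses.

```python
# Hypothetical intervals: tier -> "fetch every N cycles".
# The real per-tier frequencies are not stated in this post.
FETCH_EVERY_N_CYCLES = {1: 1, 2: 1, 3: 2, 4: 2, 5: 3, 6: 3}


def tiers_due(cycle_index: int, intervals: dict[int, int] = FETCH_EVERY_N_CYCLES) -> list[int]:
    """Return the tiers that should be fetched on the given cycle."""
    return sorted(
        tier for tier, every in intervals.items() if cycle_index % every == 0
    )


if __name__ == "__main__":
    for cycle in range(4):
        print(cycle, tiers_due(cycle))
```

With these made-up intervals, tiers 1 and 2 are fetched on every cycle, while the lower tiers only show up on every second or third cycle, which is where the API savings would come from.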

This is the most human-intensive part of the system, and deliberately so. Garbage in, garbage out. No amount of AI scoring can compensate for following the wrong people.

The Fetch Cycle

Three times daily — 7 AM, noon, and 6 PM UTC — our scheduler fires. It pulls recent tweets from each tier, extracts any linked URLs, and resolves them to their final destinations. We filter out known non-article domains (shopping sites, social media, media platforms) because a tweet linking to a YouTube video isn't a news article.
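The domain filter can be sketched with the standard library alone. The blocklist entries and the function name `is_article_candidate` below are illustrative assumptions, and the sketch expects URLs that have already been resolved to their final destination (the redirect-following step is left out because it needs network access).

```python
from urllib.parse import urlparse

# Illustrative blocklist only; the pipeline's actual list is not published here.
NON_ARTICLE_DOMAINS = frozenset({"youtube.com", "youtu.be", "amazon.com", "instagram.com"})


def is_article_candidate(url: str, blocked: frozenset = NON_ARTICLE_DOMAINS) -> bool:
    """Return True if the URL's host is not a known non-article domain."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if not host:
        # Not a parseable absolute URL, so it cannot be an article link.
        return False
    # Match the domain itself and any subdomain (e.g. m.youtube.com).
    return not any(host == d or host.endswith("." + d) for d in blocked)
```

Matching on the `.`-prefixed suffix rather than a plain substring avoids rejecting an unrelated site whose name merely contains a blocked domain.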

AI Scoring: The Judgment Layer

This is where it gets interesting. Every candidate article gets scored by Claude Haiku on a 1-10 scale across four dimensions:

- Relevance: Is this actually about technology, AI, or the builder ecosystem?

- Substance: Does it contain real information, or is it just an opinion/reaction?

- Timeliness: Is this breaking news, or a rehash of something from last week?

- Builder impact: Would this change how a builder thinks about their work?

Articles scoring below 6 get filtered out. The rest get categorized into our five buckets: AI, Dev, Startups, Security, and Tech.
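The threshold step might look like the sketch below. How the four dimension scores combine into one number is not spelled out above, so the plain average used here is an assumption, as are the key names and the helper names `overall_score` and `passes_filter`.

```python
from statistics import mean

# Key names are hypothetical labels for the four dimensions listed above.
DIMENSIONS = ("relevance", "substance", "timeliness", "builder_impact")
MIN_SCORE = 6  # articles scoring below this are dropped


def overall_score(scores: dict[str, int]) -> float:
    """Combine per-dimension scores. Assumption: a simple unweighted average."""
    return mean(scores[d] for d in DIMENSIONS)


def passes_filter(scores: dict[str, int], threshold: float = MIN_SCORE) -> bool:
    """Keep an article only if its combined score reaches the threshold."""
    return overall_score(scores) >= threshold
```

A weighted average or a minimum over the dimensions would slot into `overall_score` without changing the filter itself.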

Freshness-Weighted Ranking

Raw engagement numbers are misleading. A tweet from Elon Musk will always out-engage a detailed technical breakdown from a security researcher. But the security breakdown might be far more valuable to our audience.

Our ranking formula balances engagement with recency: score = engagement / (hours_old + 2)^1.5. This means a moderately engaged recent article can outrank a heavily engaged older one. Fresh signal beats stale virality.
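The formula translates directly into a few lines of Python. The function name `rank_score` and the sample engagement numbers are made up for illustration, and what counts as "engagement" is left as a plain number here.

```python
def rank_score(engagement: float, hours_old: float) -> float:
    """Freshness-weighted score: engagement / (hours_old + 2) ** 1.5."""
    return engagement / (hours_old + 2) ** 1.5


if __name__ == "__main__":
    # Made-up numbers: a fresh, moderately engaged article versus
    # a day-old article with four times the engagement.
    fresh = rank_score(engagement=500, hours_old=1)
    stale = rank_score(engagement=2000, hours_old=24)
    print(fresh > stale)  # the fresher article ranks higher
```

The `+ 2` offset keeps the denominator away from zero for brand-new articles, and the 1.5 exponent makes the penalty grow faster than linearly with age.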

The Daily Briefing: AI as Editor

Every morning at 6 AM UTC, our most ambitious agent kicks in. Claude Opus takes the previous 24 hours of scored, categorized articles and writes a complete editorial briefing:

- Hero story: The single most significant development, with 2-3 paragraphs of original analysis — not just a summary, but context on why it matters.

- Section roundups: Stories grouped by category, each with a concise summary that captures the key takeaway.

- Quick hits: Lower-priority items condensed to one-liners for scanning.

- Takeaway: An editorial observation that connects the day's themes. It is the kind of insight you'd get from a thoughtful colleague, not an RSS aggregator.

We then generate a hero image via DALL-E and a two-host audio version using Deepgram's text-to-speech, creating a podcast-style briefing with distinct voices.

What We Got Wrong

Building this taught us some uncomfortable lessons:

AI scoring has blind spots. Early versions over-indexed on AI news and under-indexed on security stories. The model had a subtle bias toward topics it "understood" better. We fixed this by adding category-specific scoring criteria and minimum category quotas.
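A minimum category quota can be sketched as a two-pass selection: first reserve slots for each category's best articles, then fill what is left purely by score. The function name, the dict keys, and the default quota of one per category are assumptions for illustration, not the production logic.

```python
def select_with_quotas(articles: list[dict], limit: int, min_per_category: int = 1) -> list[dict]:
    """Pick up to `limit` articles, reserving slots per category where possible."""
    # Indices sorted by descending score; indices avoid comparing dicts directly.
    order = sorted(range(len(articles)), key=lambda i: articles[i]["score"], reverse=True)

    chosen: list[int] = []
    counts: dict[str, int] = {}
    # Pass 1: give each category its quota, best articles first.
    for i in order:
        category = articles[i]["category"]
        if len(chosen) < limit and counts.get(category, 0) < min_per_category:
            chosen.append(i)
            counts[category] = counts.get(category, 0) + 1

    # Pass 2: fill any remaining slots by score alone.
    taken = set(chosen)
    for i in order:
        if len(chosen) >= limit:
            break
        if i not in taken:
            chosen.append(i)
            taken.add(i)

    chosen.sort(key=lambda i: articles[i]["score"], reverse=True)
    return [articles[i] for i in chosen]
```

With three slots and a feed of three strong AI stories plus one Security and one Dev story, this keeps the top AI story and still surfaces the Security and Dev items instead of filling every slot with AI.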

Context windows aren't infinite. Our first briefing generator tried to send every article to Opus. At 50+ articles with full descriptions, we hit context limits and got truncated output. We now cap input at the top 35 articles by score and send concise metadata, not full text.
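The cap-and-trim step could be as small as the sketch below. The field names (`title`, `url`, `category`, `score`) and the function name `build_briefing_input` are hypothetical; only the limit of 35 and the idea of sending concise metadata come from the description above.

```python
MAX_BRIEFING_ARTICLES = 35  # cap described above

# Hypothetical metadata fields; anything else (such as full text) is dropped.
METADATA_FIELDS = ("title", "url", "category", "score")


def build_briefing_input(articles: list[dict], limit: int = MAX_BRIEFING_ARTICLES) -> list[dict]:
    """Keep the top `limit` articles by score and strip them to concise metadata."""
    top = sorted(articles, key=lambda a: a["score"], reverse=True)[:limit]
    return [{field: a[field] for field in METADATA_FIELDS} for a in top]
```

Trimming before the model call keeps the prompt size roughly constant regardless of how busy the news day was.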

Engagement isn't quality. We initially weighted raw engagement too heavily. The result was a feed dominated by drama and dunks. The freshness-weighted formula was our solution, but it took three iterations to get right.

The Philosophy

The hardest part of building this system wasn't the engineering — it was deciding what "good curation" means. We landed on a simple principle: if a builder reads one thing today, would this be worth their time?

AI doesn't replace editorial judgment. It extends it. Our 186 source accounts represent a human judgment about who's worth listening to. The scoring criteria represent a human judgment about what "relevance" means. The AI just applies those judgments at a scale no human editor could sustain.

That's the real lesson: the best AI systems don't remove humans from the loop. They amplify human judgment to superhuman scale.